Artificial Intelligence – Where are we today?

December 5, 2016

Blog post from the CAS Digital Finance class on artificial and biological intelligence with Pascal Kaufmann, by Giuseppe De Carlo:

Saturday morning, 7.30 am. According to the schedule of our CAS in Digital Finance, today we will deep-dive into two topics: artificial intelligence (AI) and virtual reality (VR). Personally, I am very excited and can’t wait for the lesson to begin. I enter the classroom, a young speaker welcomes me, the beamer is already on, I look at the screen, and the projected slide already meets my expectations:

After a short introduction, Pascal Kaufmann (founder and CEO of Starmind) tells us that he would love to have the technology used in the “Matrix” that lets you acquire any kind of knowledge simply by uploading it directly into the brain. However, to reach that level, we first need to talk about the foundations of neuroscience.

The heading of the second slide brings us back to reality: “Neuroscience crash course”. Wait a minute, what?! Is Pascal really saying that he will condense his seven years of study at ETH Zurich into two hours? Now we are definitely in the movie: I start feeling like Neo when Morpheus offers him the red and the blue pill. OK, let’s stay in “Wonderland”, follow Pascal and “see how deep the rabbit hole goes”: let’s choose the red pill. As a mere mortal, I will do my best to recount what we went through in Pascal’s crash course.

The scientific journey towards developing AI unfolds in three steps:

The basic building blocks of neuronal networks are neurons. A neuron consists of a cell body, dendrites and an axon. Neurons ceaselessly scan their environment for chemical gradients and for other neurons to connect to. If a neuron cannot connect to other neurons, it dies (no worries, we produce around 80’000 new neurons every day, no matter how old we are). This scanning is nothing other than the growth of the axons, which is driven by the extension of the microtubules inside the neuron.

These microtubules behave like an ant colony: each ant is programmed to search for food and/or follow a pheromone trail. Once it finds something edible, it “takes a bite” and releases a pheromone trail, so that the other members of the colony know where the food can be found.
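To make the ant analogy concrete, here is a minimal Python sketch of pheromone-based foraging. It models only the ants described above, not real microtubule dynamics, and the parameters (`deposit`, `evaporation`, `baseline`) as well as the evaporation step itself are illustrative assumptions, not something presented in the lecture. Each ant picks a site with probability proportional to a small exploration baseline plus the pheromone already there; finding food reinforces the trail, and unreinforced trails fade.

```python
import random


def forage(n_sites=5, food_site=2, n_ants=200, deposit=1.0,
           evaporation=0.05, baseline=0.1, seed=0):
    """Toy ant-colony foraging model with illustrative parameters.

    Returns (pheromone, visits): the final pheromone level on each site
    and how many ants chose each site.
    """
    rng = random.Random(seed)
    pheromone = [0.0] * n_sites
    visits = [0] * n_sites
    for _ in range(n_ants):
        # Explore (baseline) or follow an existing trail (pheromone).
        weights = [baseline + p for p in pheromone]
        site = rng.choices(range(n_sites), weights=weights)[0]
        visits[site] += 1
        if site == food_site:
            # Food found: "take a bite" and mark the trail for the others.
            pheromone[site] += deposit
        # Every trail evaporates a little unless it is reinforced.
        pheromone = [p * (1.0 - evaporation) for p in pheromone]
    return pheromone, visits
```

Calling `forage()` should show the colony converging on `food_site`: after the first lucky find, most ants follow the trail instead of searching at random.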

Axons connect to dendrites via synapses. A neural signal travels from one cell body to the next through these axon-dendrite connections.

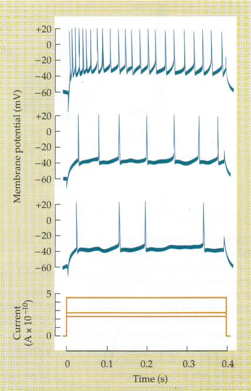

The signal can be measured with a glass electrode. Its strength is measured in milliamperes (mA) and is 0.2 mA. Stimulating the cell with a stronger signal results in a higher firing frequency, not in a larger signal amplitude.
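The last point, that a stronger stimulus changes how often a neuron fires rather than how large each spike is, can be illustrated with a leaky integrate-and-fire neuron. This is a standard textbook simplification, not a model presented in the class; the units are dimensionless and every parameter value below is an arbitrary assumption. Because the model does not simulate the spike shape, each spike is simply recorded with the same fixed peak value `spike_peak`.

```python
def lif_spike_train(current, duration=1.0, dt=1e-4, tau=0.02, r=1.0,
                    v_rest=0.0, v_thresh=1.0, v_reset=0.0, spike_peak=40.0):
    """Simulate a leaky integrate-and-fire neuron under a constant input.

    Returns (spike_count, peaks), where peaks holds the recorded
    amplitude of each spike (always spike_peak in this model).
    """
    v = v_rest
    peaks = []
    for _ in range(int(duration / dt)):
        # Euler step: the potential leaks toward rest while integrating input.
        v += dt / tau * (-(v - v_rest) + r * current)
        if v >= v_thresh:
            # All-or-none: every spike gets the same stereotyped peak.
            peaks.append(spike_peak)
            v = v_reset  # reset after firing
    return len(peaks), peaks
```

Comparing `lif_spike_train(1.5)` with `lif_spike_train(3.0)` should show more spikes for the larger input, while every entry in the returned peak list stays at `spike_peak`; an input too weak to reach threshold produces no spikes at all.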

So far so good, but how does the brain learn? How does it store information? As Pascal says, we know little about the brain: about 10% has been explored and the remaining 90% is magic:


“The mechanisms of Learning and Memory is still a very controversial and extensively treated issue in Neuroscience.”

“There is not yet any conclusive evidence for any theory suggested.”

“However, activity dependent plastic changes are still the best candidate for Learning and Memory.”

“There is strong empirical support that synapse specific changes accompany the learning process.”

(Kandel et al. (2000) “Principles of Neural Science”, New York: McGraw-Hill.)

In fact, stimulating a neuron leads to potentiation of its synaptic connections, while not stimulating it weakens those connections and, in the long term, leads to its death:

This ability of neurons to change in order to process signals more efficiently is known as neural plasticity.
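A toy, use-dependent update rule captures the behaviour described above: a synaptic weight grows while it is stimulated and decays while it is idle. This is our own simplification for illustration, not the mechanism described by Kandel et al.; the rates and the `prune_below` threshold, at which we treat the connection as lost, are hypothetical.

```python
def update_synapse(weight, stimulated, potentiation=0.1, decay=0.05,
                   w_max=1.0):
    """One step of a toy use-dependent rule: grow when used, shrink when idle."""
    if stimulated:
        # Potentiation: saturating growth toward w_max.
        weight += potentiation * (w_max - weight)
    else:
        # No stimulation: the connection gradually weakens.
        weight -= decay * weight
    return weight


def simulate(pattern, w0=0.5, prune_below=0.05):
    """Apply the rule over a stimulation pattern.

    Returns (final_weight, pruned); pruned is True if the weight fell
    below the hypothetical prune_below threshold.
    """
    w = w0
    for stimulated in pattern:
        w = update_synapse(w, stimulated)
        if w < prune_below:
            return w, True  # connection lost
    return w, False
```

Repeated stimulation, e.g. `simulate([True] * 20)`, strengthens the connection, while a long idle period, e.g. `simulate([False] * 100)`, eventually prunes it. Alternating patterns such as `simulate([True, False] * 50)` show where potentiation and decay balance out.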

The following neuronal interfacing experiment showed that a brain’s reaction can be predicted and that a brain works regardless of whether the stimulus is artificial or natural.

The idea is to connect a fish brain to a robot. The optic nerve is cut from the eyes and connected to the robot’s camera, which provides the stimulus (input) to which the brain reacts. The spinal cord is also cut and connected to the bot. The spikes measured at the end of the spinal cord are transformed into action signals for the bot (output).
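The input/output loop described above can be sketched as two mappings around the brain: light readings are encoded as stimulation of the optic channels, and spikes recorded from the spinal cord are decoded into motor commands. This is a hypothetical sketch, not the actual experimental setup: the left/right channel split, the linear gains in `InterfaceConfig` and the `brain` callable (a stand-in for the living tissue) are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

Pair = Tuple[float, float]


@dataclass
class InterfaceConfig:
    stim_gain: float = 10.0   # hypothetical: light intensity -> stimulation rate
    motor_gain: float = 0.01  # hypothetical: spike count -> wheel speed


def encode_light(left: float, right: float, cfg: InterfaceConfig) -> Pair:
    """Input side: camera light readings become stimulation for the optic channels."""
    return cfg.stim_gain * left, cfg.stim_gain * right


def decode_spikes(left: float, right: float, cfg: InterfaceConfig) -> Pair:
    """Output side: spikes recorded at the spinal cord become left/right wheel speeds."""
    return cfg.motor_gain * left, cfg.motor_gain * right


def control_step(light: Pair, brain: Callable[[float, float], Pair],
                 cfg: InterfaceConfig) -> Pair:
    """One closed-loop cycle: camera -> brain -> motors."""
    stimulation = encode_light(*light, cfg)
    spikes = brain(*stimulation)  # placeholder for the recorded neural response
    return decode_spikes(*spikes, cfg)
```

With a trivial stand-in such as `lambda l, r: (r, l)`, light on the left drives the right wheel; real neural tissue would of course respond far less simply, which is exactly what the experiment measures.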

The bot was stimulated with light beams, and the expected reaction was that it would move in a predicted direction. The following scenarios were tested:

A camera placed above the bot filmed its movements (left slide). The right slide shows two sets of graphs representing the bot’s movements: the graphs on top are the expected results (simulated by a computer), and the graphs at the bottom show the movements recorded by the camera.
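The slides compare expected and recorded movements visually. If one wanted a single number for how well the prediction matched, a simple option (our assumption, not necessarily what the experimenters did) is the root-mean-square distance between time-aligned positions, which is 0 for a perfect match and grows as the paths diverge. The function name `trajectory_rmse` is hypothetical.

```python
import math


def trajectory_rmse(expected, recorded):
    """Root-mean-square distance between two time-aligned lists of (x, y) positions."""
    if len(expected) != len(recorded) or not expected:
        raise ValueError("trajectories must be non-empty and equally long")
    total = 0.0
    for (xe, ye), (xr, yr) in zip(expected, recorded):
        # Squared Euclidean distance at this time step.
        total += (xe - xr) ** 2 + (ye - yr) ** 2
    return math.sqrt(total / len(expected))
```

In practice, the simulated trajectory would be passed as `expected` and the camera-tracked positions as `recorded`, sampled at the same time points.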

This and other experiments show that we are only at the very beginning of understanding how the brain works. They are first steps towards creating artificial neuronal networks, and although science and technology have made huge strides, there is still a long way to go.

So, where do we stand today with AI? If we talk about artificial intelligence, we should first define what intelligence is. Pascal therefore asks the audience what they understand by intelligence. The replies alone show that there is no common understanding of what intelligence is: Is somebody who is good at chess intelligent? Or does he simply have a particular skill at chess? What would you say about a kid who is good at chess? What about a machine that can play chess (and beat the best human player)? Would you say the machine is intelligent? We all agree that intelligence is more than that: it involves movement, a large variety of behaviours, adaptivity, skills, etc. In short, “intelligence requires a body”.

Although machine learning is advancing, the bottleneck in transforming information into knowledge has some parallels with the Middle Ages. 700 years ago, access to information was very poor and restricted to a limited circle of individuals (mostly monks and nobility). Copying a Bible took years and required particular skills, and once it was finished, only a small group of people had access to this knowledge. Today the challenge for AI is to filter out of a huge amount of information what is relevant in the current context and transform it into knowledge.

After watching the short film “Humans Need Not Apply”, we started a discussion in the classroom. What does the class think of AI?

Opinions range from “this is a further automation step; one sector will lose jobs, but new sectors and new jobs will compensate for this” to “we will lose about 30% of jobs forever”, to the more dramatic view that this is a step in human evolution at the end of which machines take over and the human race goes extinct. However, we do not yet know where this journey will lead. Maybe it could unfold like this:

It starts with a fish brain connected to a robot and continues with artificial networks capable of bundling knowledge through devices (see the Starmind Brain). Devices will then be increasingly integrated, first into our clothing (wearables) and later into our bodies (exoskeletons, lenses connected to the internet, etc.), turning us into cyborgs, until eventually AI surrounds us. Humans will then have a lot of leisure time, as AI will do all the work for us, unless, according to Jürgen Schmidhuber’s theory, AI gets rid of us and leaves planet Earth to conquer the universe. In that sense… Welcome to the MATRIX!
